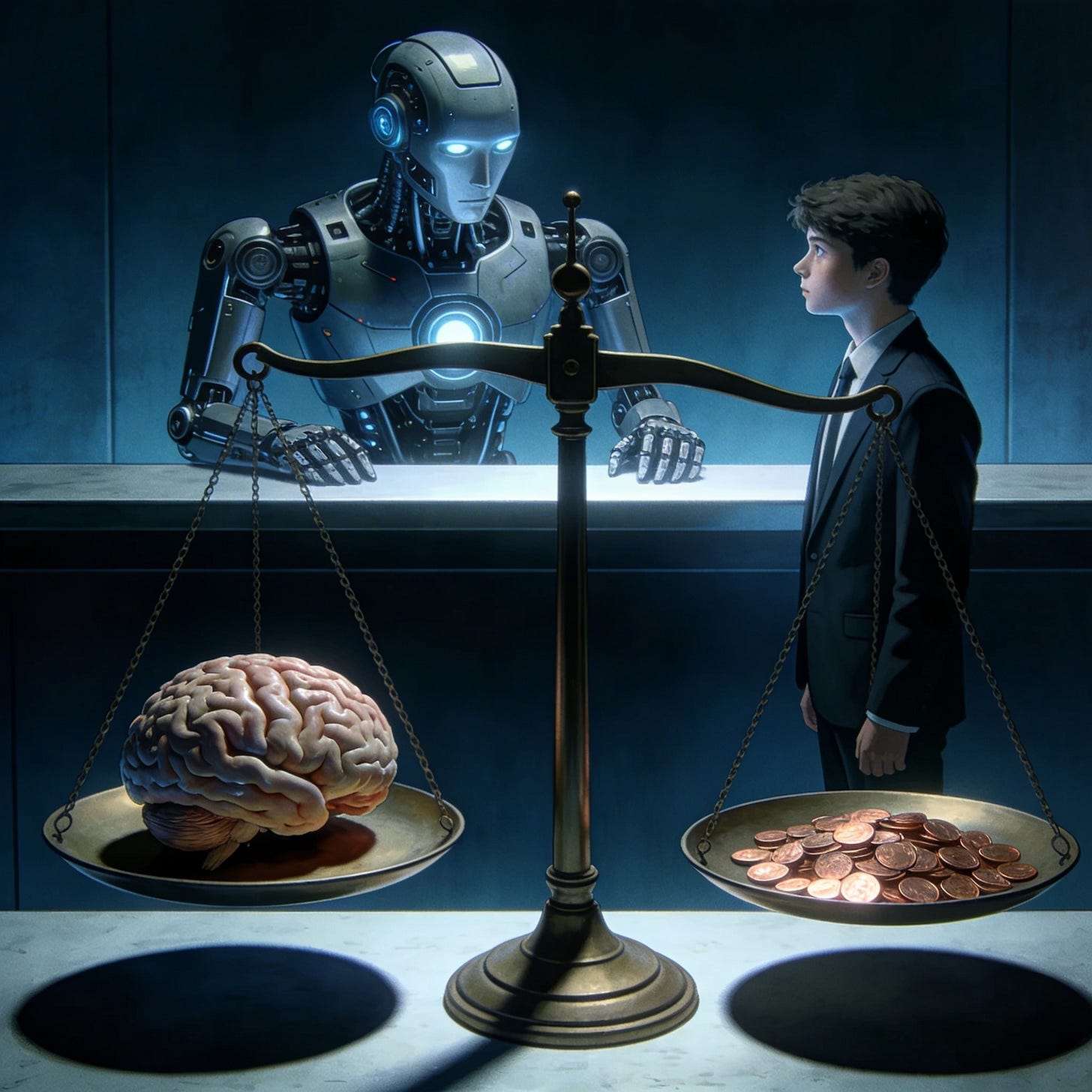

Youngsters Are Pawning Their Brains to AI

The Refusal to Do the Hard Work and the Curiosity Gap Are Concerning

As a CTO and CEO, I do a lot of hiring. I speak with candidates across a diverse set of roles: engineers, designers, managers, strategists, you name it. Hiring is something I genuinely enjoy. It gives me a welcome break from the daily grind, a chance to meet interesting people and understand their stories and backgrounds, and quite often an opportunity to learn something new myself.

However, over the last year or so, I’ve noticed a silent but deeply concerning trend — one that I believe deserves a much louder conversation than it’s currently getting.

The Interview Paradox

Candidates today appear remarkably sharp during interviews — especially online interviews. They articulate complex concepts with precision, answer curveball questions with near-perfect composure, and demonstrate what seems like extraordinary depth of knowledge.

And then they join the company.

The difference is staggering. The same individuals who dazzled us in interviews often struggle with fundamental problem-solving, independent thinking, and even basic tasks that should be well within their capability. It’s as if we hired one person but a completely different one showed up on Day 1.

What changed? Nothing about them changed. They simply no longer had their invisible co-pilot sitting in another tab.

The Uncomfortable Truth

Here’s what’s really happening: candidates — many, though certainly not all — are relying heavily on ChatGPT, Claude, Gemini, and other AI models. And I don’t mean as a supplementary tool. I mean for almost everything — their knowledge, their task execution, their decision-making, their opinions.

Let that sink in for a moment.

Interns are using AI as their interns — to fetch answers, draft solutions, and get the job done.

While that is comically ironic, it also exposes something far more serious: a growing gap in independent thinking and, frankly, a cultural gap in how the next wave of professionals is approaching work and learning.

The Real Problem: Outsourced Thinking

The issue isn’t that people are using AI. AI is a powerful tool, and I’d be a hypocrite to suggest otherwise. The issue is that people are substituting AI for thinking — entirely.

There is a world of difference between:

Using AI to accelerate your work, and

Using AI to replace your understanding.

What I’m seeing with alarming frequency is the latter. Candidates are making themselves dangerously vulnerable to a technology they don’t fully understand and have no critical perspective on. They aren’t adding any layer of judgement, expertise, or even basic intuition to the solutions they present. There’s no “sixth sense” — that instinct you develop only through years of doing the hard, unglamorous work of actually learning something deeply.

They’re copying. They’re pasting. They’re presenting. And they’re calling it competence.

The Refusal to Do the Hard Work

Let’s be blunt: they are refusing to do the age-old hard work.

The hard work of sitting with a problem until it clicks. The hard work of failing, debugging, and failing again. The hard work of building mental models through repetition and struggle. The hard work of developing a point of view that is truly yours — earned, not generated.

This is the work that separates a professional from a pretender. And it’s being skipped at a scale I’ve never seen before.

The Curiosity Gap

Perhaps what alarms me even more than the lack of effort is the shallowness of curiosity I’m witnessing in so many candidates these days.

There was a time when professionals — especially young, hungry ones — were driven by an almost restless need to understand the what, the how, and the why behind everything they touched. That itch to dig deeper, to pull the thread until the whole tapestry made sense — it was the engine of genuine expertise.

Today, that engine is sputtering. The emphasis has shifted overwhelmingly toward instant gratification — get the answer, ship the output, move on. The question is no longer “Why does this work?” but “Does it work? Good enough.”

And honestly, can we entirely blame them? This is probably a reflection of how thoroughly complexity has been abstracted away in everyday life. Think about it: you order food without understanding supply chains, you hail rides without thinking about routing algorithms, and you stream entertainment without a passing thought about the infrastructure that delivers it. You get almost everything you need on a sort of autopilot. Everything just works. So why bother with the details? Why peek under the hood when the car drives itself?

That “why bother” mentality — harmless in everyday convenience — becomes catastrophic when it seeps into professional life. Because in the real world of building products, solving problems, and making consequential decisions, the details aren’t optional. The details are the job. And curiosity — deep, stubborn, sometimes inconvenient curiosity — is the only thing that gets you there.

Without it, you don’t have professionals. You have operators following scripts they didn’t write and don’t understand.

This Is NOT Just a “Gen Z Problem”

Now, before anyone rushes to label this a generational issue, let me be clear: I don’t think this is a Gen Z problem. It’s far more widespread than that. I’ve seen it across age groups and experience levels. The temptation to lean on AI as a cognitive crutch doesn’t discriminate by birth year.

But here’s the thought that genuinely keeps me up at night:

If this is where we are today, with professionals who had at least some years of education and early-career experience without AI, then what happens with Gen Alpha? What about Gen Beta? What about generations that will never know a world where AI wasn’t the default answer to every question?

If we dismiss this as a generational quirk rather than addressing it as a fundamental shift in how humans relate to knowledge and effort, then we are not doing our introspection justice.

A Call to Reflect

To the young professionals reading this: AI is not your enemy. But it is also not your brain. It’s a tool — an extraordinarily powerful one — but a tool nonetheless. A chainsaw can help you clear a forest, but if you’ve never learned to identify which trees to cut, you’ll destroy the very thing you were meant to build.

Develop your own mind first. Build that foundation of deep knowledge, hard-won intuition, and independent judgement. Then use AI to amplify what you already bring to the table.

Because when the tab is closed and the chat window is gone, what remains is you — your thinking, your perspective, your ability to navigate ambiguity and complexity.

Make sure there’s something there worth hiring.

The sharpest tool is useless in the hands of someone who never learned the craft.